The 10 commandments for vibe coding / agentic coding

Raising the bar so it's production-ready

Since the launch of GPT-4, I’ve spent an intense and insane amount of time coding with AI. It’s been a wild ride, and I’ve learned a ton. In this post, I want to distill everything I’ve learned from this experience into 10 practical rules – my 10 commandments for vibe coding.

What is vibe coding?

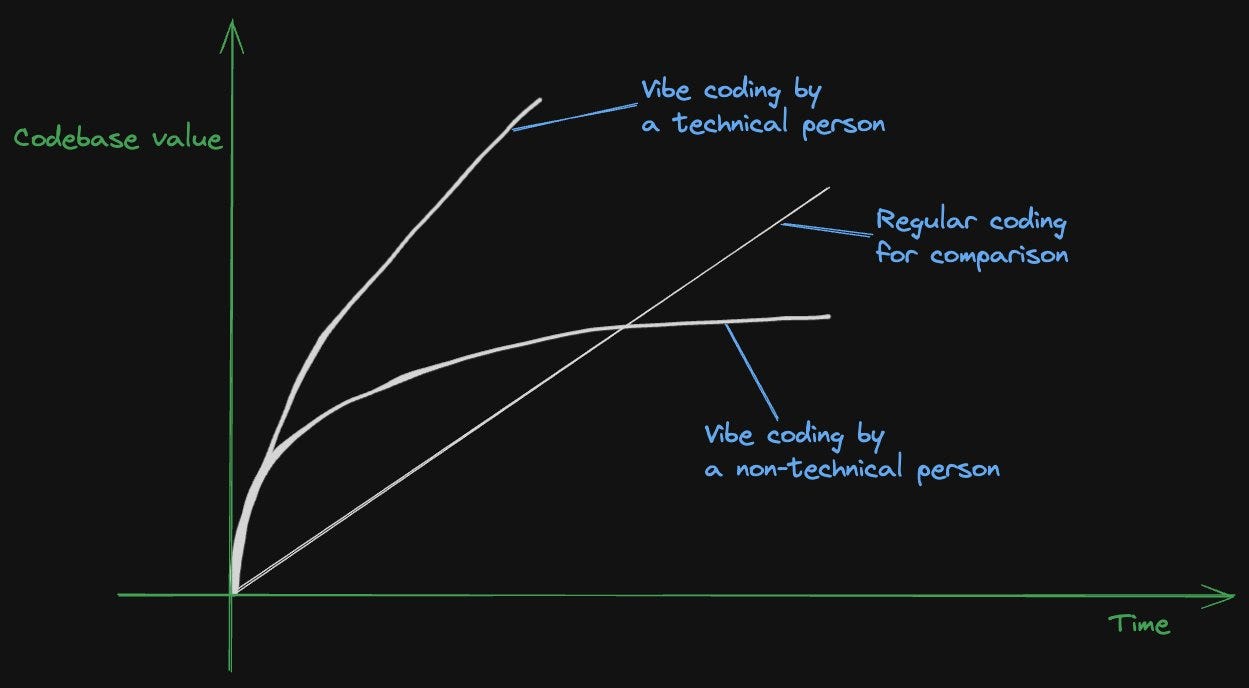

Well, you might say all coding with LLMs is vibe coding, but I don’t think that’s quite the case. The way I understand it is that vibe coding is a form of coding that started as more casual coding, where you ask an LLM for pieces of code or blocks of code, and then you don’t necessarily check every single line. If you check every single line, that’s more methodical. I don’t think that’s just vibes-based, so I don’t consider that vibe coding.

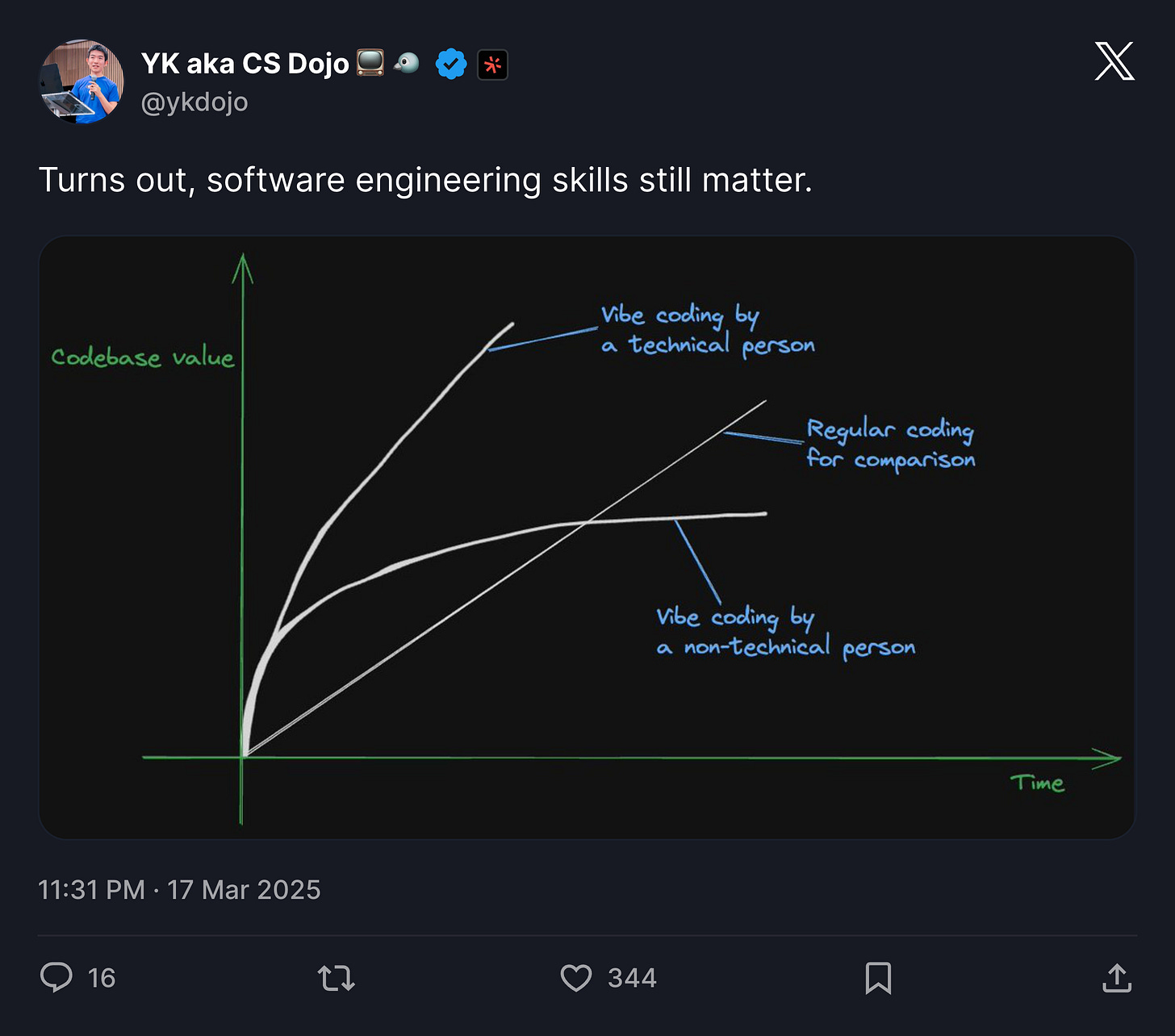

Even though it started as more of a casual activity, I believe that it has the potential to become something more professional, something more skill-based, just like regular software engineering is. That’s why I wanted to dive into this topic, so that I can raise the bar for vibe coding and basically turn vibe coding into something more serious, something production-ready.

So, let’s dive into this list.

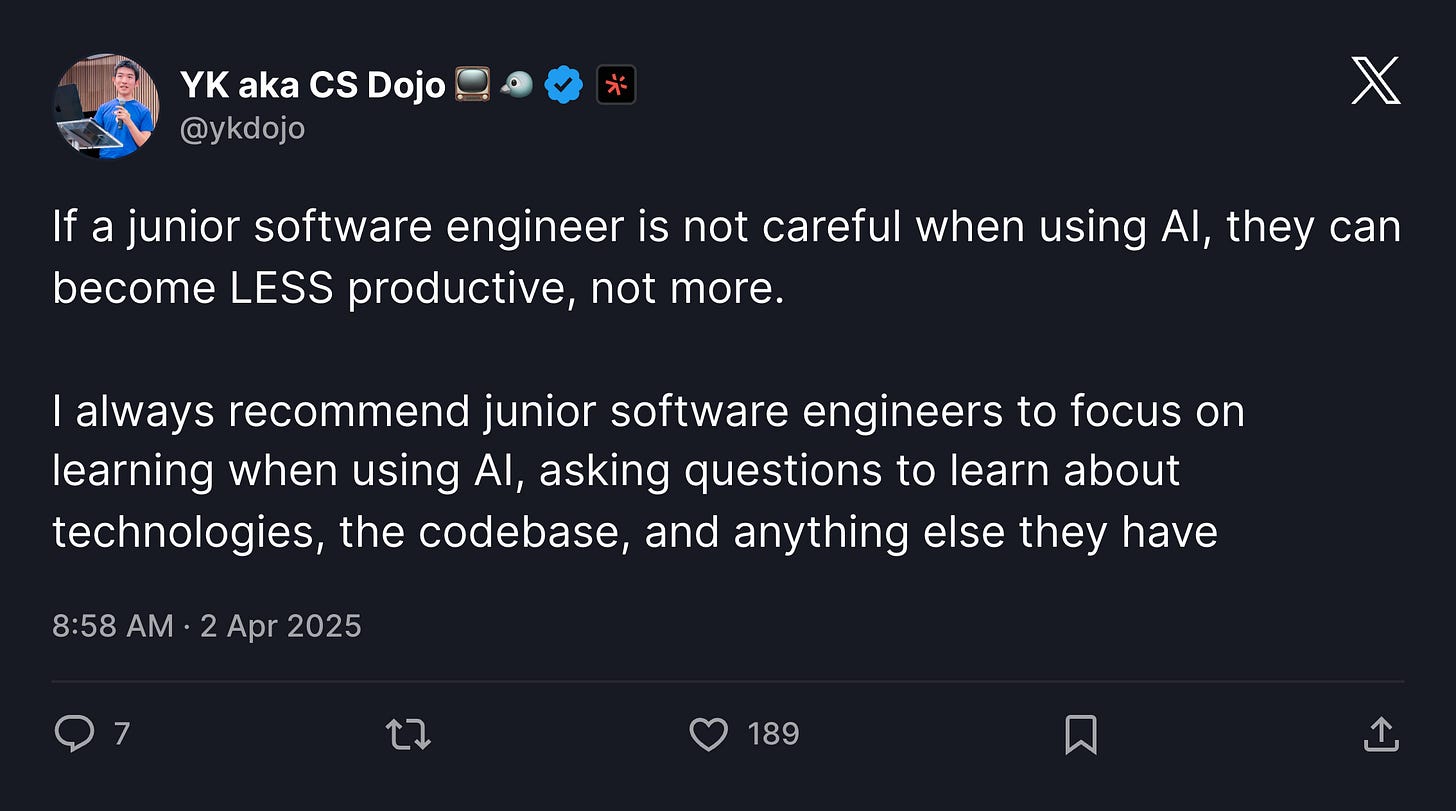

1. Thou shalt not rely on it too much if you are a junior.

I say this because, if you’re a junior, you don’t know what you’re doing yet (no offense intended). You might make a lot of mistakes if you try to reach for something that you’re not ready for yet. You might make architectural decisions that are actually really bad. And no matter how much code you produce, no matter how high-quality individual pieces of code are, if you make major mistakes like that, it’s going to be very, very hard to recover from that mistake.

The best way for a junior to use these LLMs, in my opinion, is to understand something or to learn something. You should always start with high-level questions like, “tell me about this framework,” or “what is the difference between this framework and this other framework,” or “this language and this other language.” You can also ask questions about your codebase by saying stuff like, “Tell me about this codebase,” or “What does this part of the codebase do?” Try to ask a lot of questions without writing a lot of code to understand everything you need to know. This process is the same as before these LLMs were introduced. But with the introduction of advanced LLMs, this process has become much faster, and you can learn things much faster.

Even as a senior software engineer, it’s important to be careful when generating code with LLMs, but it becomes extra important with juniors, because it’s just easier for them to make major mistakes, like major architectural mistakes.

Also, keep in mind that everyone can be a junior depending on the context and domain. For example, if I’m working with Rust, I know next to nothing about it, so I’ll be more careful than usual when working with AI, and I’ll be asking more basic questions. It’s just a matter of the specific domain you’re working on and the proficiency level and amount of knowledge you have in that particular domain.

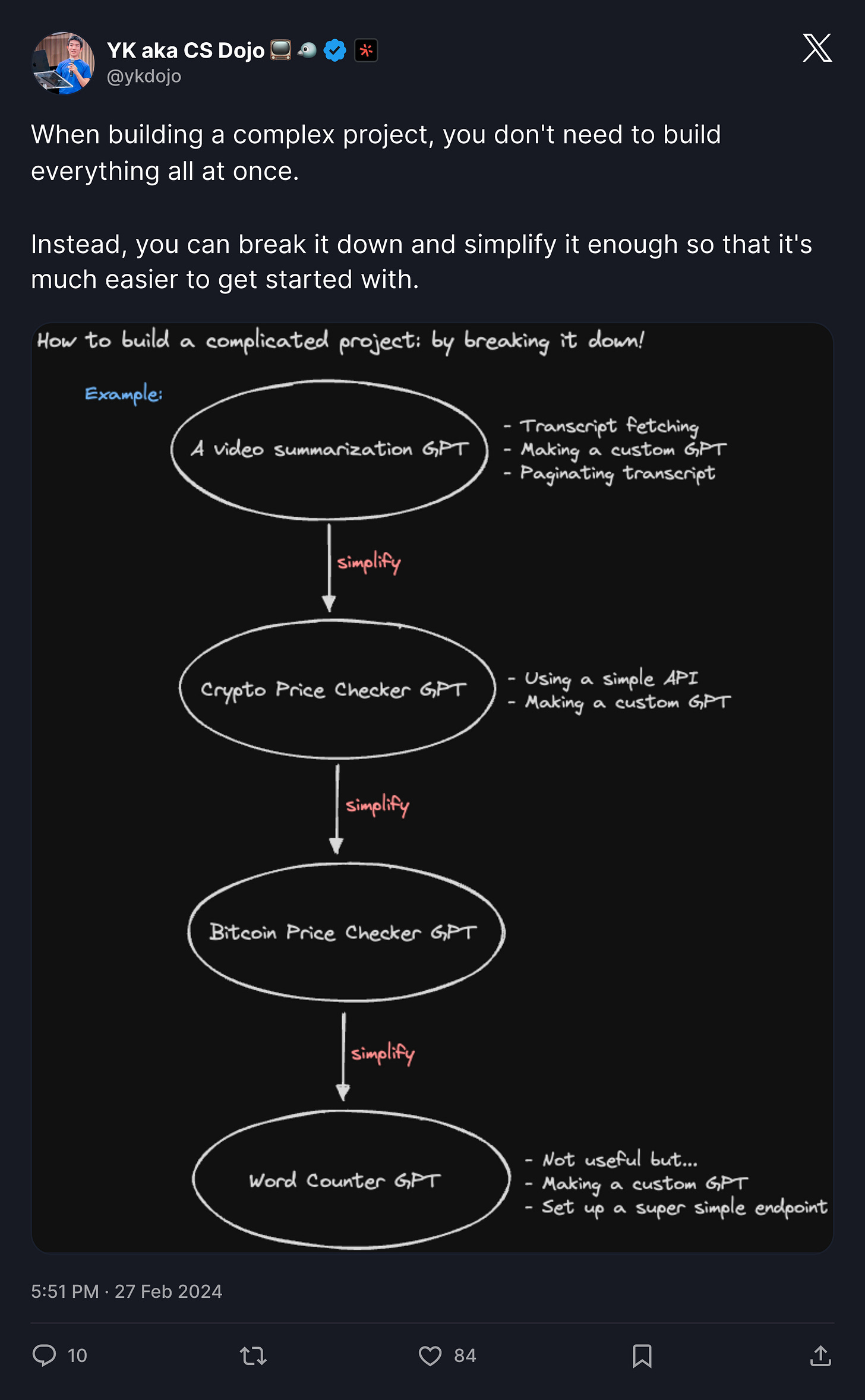

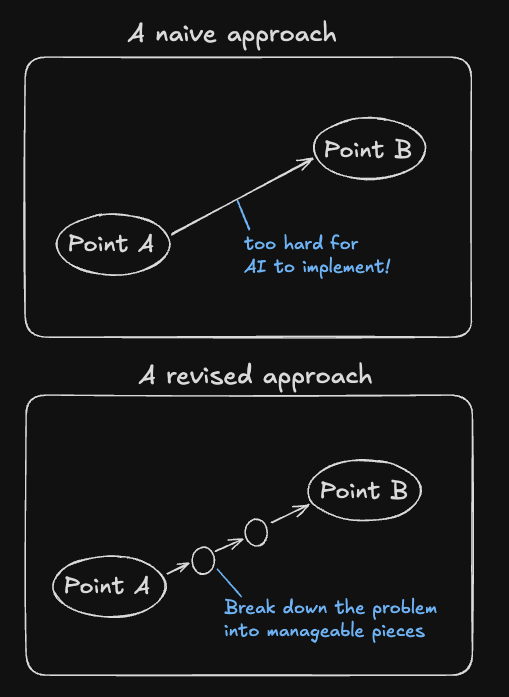

2. Thou shalt break down a large problem into smaller ones.

Even before AI, a big part of the job of a software engineer was to break down large problems into smaller ones. This is no different in the age of AI.

Here’s an example: a colleague of mine was trying to create a system that collects data from an API and then shows it in a dashboard. So it has a lot of components: the front-end part, the data processing back-end part, and interacting with the API. That’s a lot to deal with for AI, even with advanced ones. So what I would suggest for a case like that is, first, make sure it works with fake data. It might be small fake data, like fake CSV data – a small piece of representative data, so that you can focus on the UI part of it first. Make sure that works. And then you can think about, “OK, how do I interact with the actual data? Do I download stuff manually? Or if I want to automate it more, can I use an API to automate that process?”

When you break down a problem like that, it becomes much easier for AI to handle. Similarly, if you ask AI to solve something and the task turns out to be too hard for it, you can ask AI to break the problem down for you.
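Here is a minimal sketch of the "fake data first" idea, assuming a tiny dashboard that only needs a few summary numbers. The CSV contents, the column names, and the function names (`load_fake_rows`, `summarize`, `build_dashboard`) are all made up for illustration; they are not from my colleague's project.

```python
import csv
import io
from typing import Callable

# Hypothetical sample data standing in for the real API response.
FAKE_CSV = """date,signups
2024-01-01,12
2024-01-02,18
2024-01-03,9
"""

Row = dict[str, str]


def load_fake_rows() -> list[Row]:
    """Return rows parsed from the small in-memory fake CSV."""
    return list(csv.DictReader(io.StringIO(FAKE_CSV)))


def summarize(rows: list[Row]) -> dict[str, int]:
    """Compute the numbers the dashboard would display."""
    values = [int(row["signups"]) for row in rows]
    return {"days": len(values), "total": sum(values), "max": max(values)}


def build_dashboard(load_rows: Callable[[], list[Row]]) -> dict[str, int]:
    """Depend only on a loader function, so a real API client can replace it later."""
    return summarize(load_rows())


if __name__ == "__main__":
    print(build_dashboard(load_fake_rows))
```

Because `build_dashboard` only takes a loader function, the UI and processing parts can be finished and tested before anyone touches the real API. Swapping in real data later means writing one new loader, which is a much smaller task to hand to AI.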

If it’s a large migration project or large-scale change that you want to introduce to your codebase, first branch out to a new branch if you’re using Git or something similar. Then ask AI to create a plan for it. You can create a detailed plan, put it in a document, review it together, and make sure it’s in an updatable format so that AI can update the document itself as it makes progress.

You can ask AI to make changes by itself, committing at each point and testing every single change. If you feel it’s making too many changes all at once, you can ask it to break the work down further. For example, if it needs to make 100 changes and it’s making 20 changes at once, and it’s not working, you can ask it to change just two or three files at a time so it’s able to test everything properly.
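To make the "small batches" idea concrete, here is a rough sketch that splits a list of files into batches and renders them as a checklist the AI can tick off as it goes. The markdown checkbox format and the function names are my assumptions for illustration; any updatable format works.

```python
from typing import Iterator


def batches(files: list[str], size: int) -> Iterator[list[str]]:
    """Yield consecutive groups of at most `size` files."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(files), size):
        yield files[start:start + size]


def render_plan(files: list[str], size: int) -> str:
    """Render one unchecked checklist line per batch."""
    lines = []
    for number, batch in enumerate(batches(files, size), start=1):
        joined = ", ".join(batch)
        lines.append(f"- [ ] Step {number}: {joined} (run tests, then commit)")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical file names for a migration.
    print(render_plan(["a.py", "b.py", "c.py", "d.py", "e.py"], size=2))
```

If a step fails, you lower `size` and regenerate the remaining steps, which is the same move as asking the agent to go from 20 changes at once down to two or three.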

3. Thou shalt write lots of tests.

For this one, the fundamental thing I noticed is, first of all, tests are helpful in general with or without LLMs. They help your project become more stable. They help you catch bugs earlier than you would otherwise. Writing tests has always been around and it’s always been a good practice in general.

But humans tend not to like writing tests. It’s not a very enjoyable activity for a lot of software engineers. AI agents, though, don’t have any problem writing tests. They’re not going to complain about it (as long as you ask them nicely, I guess).

Writing tests becomes more important because AI still tends to make a lot of mistakes when it writes code. And if you don’t read all the code you generate with AI, how can you make sure the quality is good? Well, it’s by writing tests, but specifically by having AI write tests.

You might say, okay, that’s not very good because if you have AI write tests, then how do you know the tests are good themselves? Well, here is the way I think about it. First of all, even if the tests are not perfect, even if they’re, let’s say, 70% accurate or even 30% accurate, as long as they’re somewhat useful and somewhat accurate, you are testing some of the right things. I see it like a shotgun approach. Even if you don’t hit the target right away, if you write more tests, then eventually you’ll hit it. Even if you’re using a shotgun, you’ll just need to basically fire more.

The second way I think about it is you can quickly glance at the tests the AI has created to make sure they don’t have any obvious flaws. And on top of that, you can ask, “Okay, did you test this thing? Did you test this other thing? What are these tests doing exactly?” And that way you can make sure that the AI-generated tests clear a certain quality bar.

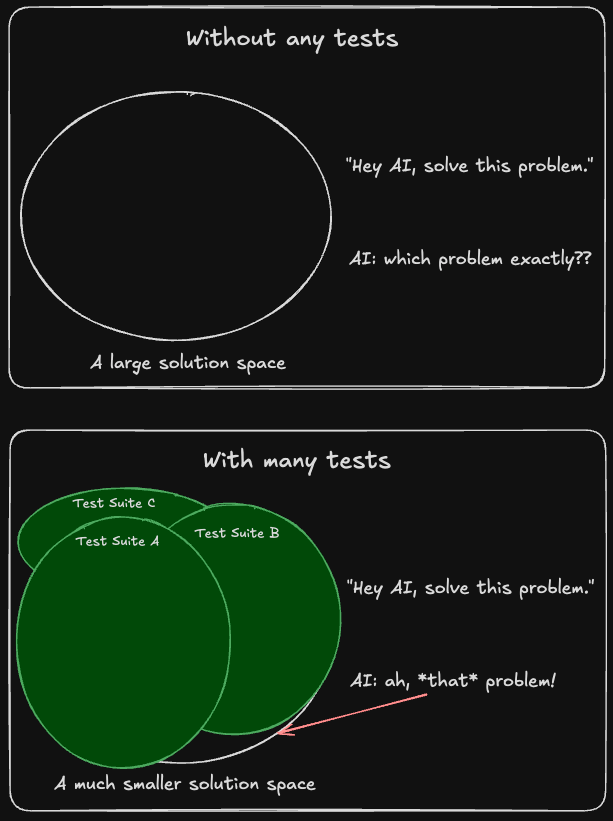

This is something I was skeptical of at first too. I didn’t think it would make sense for AI to write tests for code that it generated. How can it test itself? But it turns out it’s super effective. And I think part of the reason is that if you ask AI to write code or fix something, it doesn’t necessarily know what it needs to fix exactly. It has a large space of potential code or solutions to explore. But when you have it write tests first, you’ll know which parts of the code are working, the best practices it incorporated into the tests, and the syntax it used. That leaves a much narrower space to search when it tries to create a solution or fix a bug. I found this really surprising, and I highly recommend that everyone try it.
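As a small illustration of how tests narrow the space, here is a sketch with a made-up function, `slugify`, and three tests that pin down its behavior. Both the function and the test cases are hypothetical; the point is only that each test removes a whole class of wrong solutions.

```python
import re
import unittest


def slugify(title: str) -> str:
    """Hypothetical function under test: lowercase, hyphen-separated slug."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    return "-".join(words)


class SlugifyTests(unittest.TestCase):
    def test_lowercases_and_joins_words(self):
        self.assertEqual(slugify("Hello World"), "hello-world")

    def test_drops_punctuation(self):
        self.assertEqual(slugify("Rust, or Go?"), "rust-or-go")

    def test_empty_title_gives_empty_slug(self):
        self.assertEqual(slugify(""), "")
```

You can run this with `python -m unittest` against the file you save it in. When a test like `test_drops_punctuation` fails, the agent gets a specific input and expected output to work toward instead of a vague "fix the slug function."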

Additionally, I see code as a liability, not an asset. Eventually, when you have a lot of code, it starts to slow you down. But tests are more of an asset because the more you have them, the better your agents and AI will be able to perform. Other practices like refactoring your code are also important, but I think the first step to make sure your codebase remains high-quality is writing lots of tests.

4. Thou shalt not exceed 400 lines of code for each file.

This is not a hard and fast rule per se, but I first noticed with GPT-4 and GPT-4 Turbo (the OGs of advanced AI models from a couple of years ago) that they handle files of 300 lines or fewer pretty well. They can write files of this length and edit them effectively. Beyond that, they generally still manage at around 400 lines, but once files reach 500-600 lines, they become less accurate and less capable of creating and editing them.

So, the best practice is to keep each file under 400 lines of code. This is a rule of thumb, not set in stone, but worth keeping in mind. I’ve observed this limitation with Claude 3.7 Sonnet as well. While I’m not sure if it’s because of how these models are trained or their ability to process large data, it appears to be a consistent pattern. Some newer models might be able to handle longer files better, but keeping files under 400 lines is good practice anyway - with or without LLMs - for better code organization.
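If you want to check a codebase against this rule of thumb, a short script is enough. This is a minimal sketch, assuming Python source files and a default limit of 400 lines; the function names and the `*.py` pattern are my choices for illustration.

```python
from pathlib import Path


def count_lines(path: Path) -> int:
    """Count lines in a text file, tolerating odd encodings."""
    with path.open("r", encoding="utf-8", errors="replace") as handle:
        return sum(1 for _ in handle)


def oversized_files(
    root: Path, limit: int = 400, pattern: str = "*.py"
) -> list[tuple[Path, int]]:
    """Return (path, line_count) for files under `root` longer than `limit`."""
    results = []
    for path in sorted(root.rglob(pattern)):
        if path.is_file():
            lines = count_lines(path)
            if lines > limit:
                results.append((path, lines))
    return results


if __name__ == "__main__":
    for path, lines in oversized_files(Path(".")):
        print(f"{lines:6d}  {path}")
```

The output is a natural to-do list for the agent: take one oversized file at a time and ask it to split that file, with tests run after each split.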

5. Thou shalt organize your codebase well.

Again, this is a good practice with or without LLMs, but this is particularly important with AI-generated code because you’re going to generate a lot of code, both tests and non-tests.

Whenever you add more and more code fast with AI, everything should be organized so that both humans and AI can find exactly what they are looking for. It shouldn’t be like spaghetti code; it should be well organized, well understood. There should pretty much always be one clear way to do something. If there is a complex path to accomplish a certain task, where you need to go from one part of the codebase to a totally unrelated part and then another totally unrelated part, that would be a huge miss and that wouldn’t be good for either humans or AI.

So it’s important to organize your codebase, and I think as we go forward, more and more of the software engineering job will be that: organizing your codebase, managing AI agents to write code for you, not necessarily writing everything by yourself.

6. Thou shalt move slow so that you can go fast.

This is also true of the pre-AI era of software engineering. Oftentimes, management has a goal to implement something or create a feature for their customer, and they try to push the engineering team too fast. As a result, some of the points I’ve already mentioned, like organizing your codebase and writing tests, get neglected. You tend to accumulate a lot of tech debt over time that way.

This is even more true with AI, which generates code quickly but not always accurately. So it is extra important to move slow so you can go fast over time. If you want to create a throwaway project or experiment, something really, really fast, like in a couple of days, I think that’s totally fine. It depends on the purpose. But if you want to create a long-living, maintainable codebase, it’s crucial to move slow, and you might need to advocate for this with your management if you’re in that situation. It is essential for management to understand this concept too.

7. Thou shalt make architectural decisions carefully.

Okay, number seven is similar to some of the points I’ve already raised. When you start a new project, pre-AI, it was important to make good architectural decisions. Post-AI, it’s the same thing. You might be able to write code fast, so you are able to move things fast and maybe run into issues earlier than you would otherwise.

But still, I like to think of it like you’re launching a powerful and fast rocket. Wherever you point, it goes fast in that direction. And when you have this powerful tool, of course, it’s important to point it in a good direction.

Now, luckily, this same tool – this AI agent, this AI assistant – is able to help you make better decisions about this. So whenever you first start a project, it’s important to ask architectural questions like, “Should I use this framework versus this other framework?” “What about this language versus this other language?” “What are the trade-offs?” “What questions should I be asking?” or “What concerns would you have about this project over time?” Asking a lot of questions at the beginning will help you make better decisions earlier so that you don’t end up wasting a lot of tokens, time and money going down the wrong path.

8. Thou shalt provide or let AI collect necessary context.

This might be an obvious one for some, but it’s not necessarily obvious for everyone. A good example is when you’re working with your codebase: you want to provide all the files the AI needs, or the relevant parts of those files, so it can make informed decisions about your codebase. If you’re asking it to change code, you might ask it to read specific tests or parts of specific test files so that it has more context about what’s working and what’s not.

Another one is with documentation. If it doesn’t have enough information about the latest version of APIs or the latest version of libraries or frameworks, then you can copy and paste the whole documentation or relevant parts of the documentation and then you can ask it to create something based on that.

You can either do it manually by providing the right context or you can let AI collect necessary context by itself if it has those agentic capabilities.

9. Thou shalt keep the provided context to a minimum.

While you want to provide enough context for AI to know exactly what it’s doing – about your codebase and documentation and all of that – you don’t want to provide too much context, because then it starts to get confused and hallucinate.

The way I think about it is, it’s like you’re looking at two people. One of them has a clean desk, not a lot on their desk, maybe a few things, a few important documents. The other one has so many documents on their desk, the desk is totally full. If you ask both of them to do something at the same time, chances are, the first person will be more focused, less distracted, and they’ll be able to get stuff done. And AI seems to be the same way, with the different models that we have.

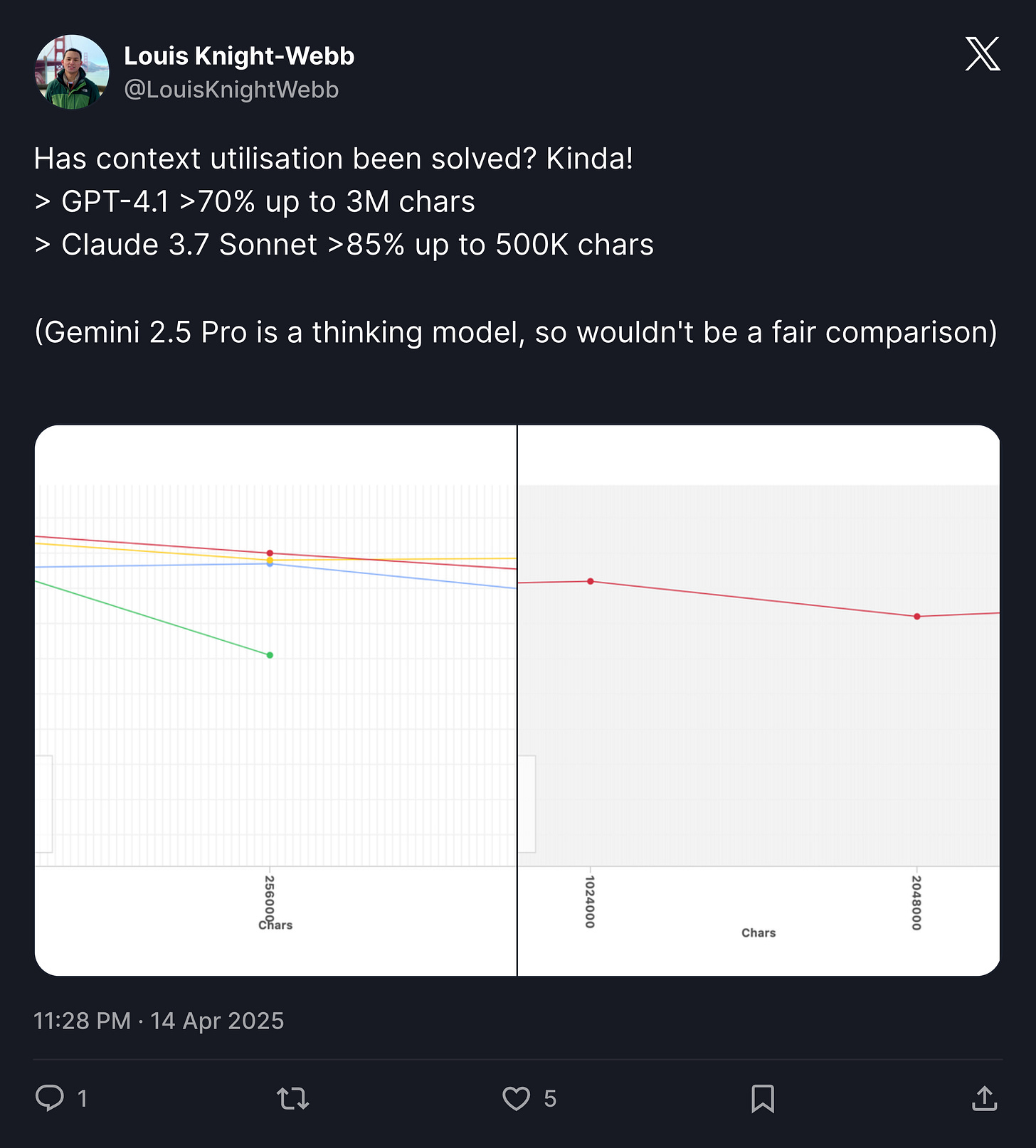

While recent models are getting better at handling longer contexts, there are still limitations. One way this shows up is that it sometimes helps to start a new thread if the conversation becomes too long, as the model might not be able to handle all the context from previous messages.

So it’s important to provide enough context, but not too much. And that’s going to help you save costs too.
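One way to picture "enough, but not too much" is a greedy selection under a size budget. The sketch below is only an illustration under my own assumptions: relevance is a crude keyword count, the budget is measured in characters rather than tokens, and `select_context` is a made-up helper, not how any particular tool works.

```python
def select_context(
    candidates: dict[str, str], keywords: list[str], budget_chars: int
) -> list[str]:
    """Pick the most relevant files that fit within a character budget."""

    def score(text: str) -> int:
        # Crude relevance: how often the task keywords appear in the text.
        lowered = text.lower()
        return sum(lowered.count(keyword.lower()) for keyword in keywords)

    # Highest score first; ties are broken by file name for stable output.
    ranked = sorted(candidates, key=lambda name: (-score(candidates[name]), name))

    chosen: list[str] = []
    used = 0
    for name in ranked:
        text = candidates[name]
        if score(text) == 0:
            continue  # Irrelevant files never go on the desk.
        if used + len(text) <= budget_chars:
            chosen.append(name)
            used += len(text)
    return chosen


if __name__ == "__main__":
    # Hypothetical file contents.
    files = {
        "auth.py": "def login(user): check password",
        "billing.py": "def charge(card): ...",
        "auth_test.py": "test login with bad password and good password",
    }
    print(select_context(files, ["login", "password"], budget_chars=1000))
```

Real agentic tools make this decision with search and file reads rather than keyword counts, but the trade-off is the same: rank by relevance, then stop adding once the desk is full.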

10. Thou shalt go agentic.

Okay, the last one is something I referred to a little bit throughout this post, which is going agentic. What it means is that traditionally, AI would give you answers, but it wasn’t able to do stuff for you. But with agents, it is able to do that.

Essentially, GPT-4-level and later AI models have the ability to perform tasks. Recent models like Claude 3.7 Sonnet can plan a little further ahead than was possible with previous models. That means they can look at files by themselves and perform a series of searches to find what they’re looking for, as well as edit code. The latest Claude models in particular are great at editing code.

And in my experience, it’s much better when you don’t have to copy and paste everything all the time, when you don’t have to instruct it to do every single thing. So I would highly recommend going agentic. You can either create a solution like that yourself if you want, or you can use an existing solution like Claude Code, Windsurf, or Amp.

11. (Bonus) Thou shalt create a containerized dev environment for your AI agent.

Commandments 1 through 10 are the crucial ones to get started and become proficient with vibe coding/agentic coding. Looking into the future, though, this is where we are going.

In the future, there will be more and more use cases like the following: you have a problem, you may or may not have test cases for it already. You want to solve it, but you don’t want to just have one agent running locally solving it. You want to have maybe five, maybe ten, maybe even a hundred agents running at the same time trying different solutions, trying to find the best one out of all of them.
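Here is a toy sketch of that fan-out, assuming the "agents" have already produced candidate solutions and all we need to do is score them against test cases and keep the best one. The candidates here are plain Python functions standing in for agent output, and `passed_count` and `pick_best` are hypothetical names; a real setup would run each candidate in its own environment.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Candidate = Callable[[list[int]], list[int]]
TestCase = tuple[list[int], list[int]]


def passed_count(candidate: Candidate, cases: list[TestCase]) -> int:
    """Count how many test cases a candidate passes; a crash counts as a failure."""
    passed = 0
    for given, expected in cases:
        try:
            if candidate(list(given)) == expected:
                passed += 1
        except Exception:
            pass
    return passed


def pick_best(candidates: dict[str, Candidate], cases: list[TestCase]) -> tuple[str, int]:
    """Score all candidates concurrently and return (name, score) of the best."""
    names = sorted(candidates)
    with ThreadPoolExecutor() as pool:
        scores = list(pool.map(lambda name: passed_count(candidates[name], cases), names))
    best = max(range(len(names)), key=lambda index: scores[index])
    return names[best], scores[best]


if __name__ == "__main__":
    cases = [([3, 1, 2], [1, 2, 3]), ([], []), ([1], [1])]
    attempts = {
        "a_reversed": lambda xs: sorted(xs, reverse=True),
        "b_sorted": sorted,
        "c_broken": lambda xs: xs[0],
    }
    print(pick_best(attempts, cases))
```

Notice that the selection step only works if you have test cases to score against, which is one more reason commandment 3 matters.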

This idea is called inference-time scaling and it was explored recently by OpenHands. For agents to be able to do that, the important thing is that each of them has their own dev environment. Probably the best way to do that is through a containerized dev environment.

You can put your whole repo in a container, and then spin up that container together with a second container that has an agent running inside it, as a pair. You can have a shared volume between them so the agent can edit files in the dev environment container. You should also give the agent SSH access so that it’s able to run commands within that container too.
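As a rough sketch of the pairing, the function below builds the Docker commands for one dev container and one agent container that share a named volume. The image names, the `/workspace` mount path, and the naming scheme are assumptions for illustration, and SSH setup is left out entirely. It only builds the command lists; it does not run them.

```python
def pair_commands(run_id: str, dev_image: str, agent_image: str) -> list[list[str]]:
    """Build docker commands for one dev/agent container pair sharing a volume."""
    volume = f"workspace-{run_id}"
    mount = f"{volume}:/workspace"  # Assumed mount path inside both containers.
    return [
        ["docker", "volume", "create", volume],
        ["docker", "run", "-d", "--name", f"dev-{run_id}", "-v", mount, dev_image],
        ["docker", "run", "-d", "--name", f"agent-{run_id}", "-v", mount, agent_image],
    ]


if __name__ == "__main__":
    # Hypothetical image names; print the commands instead of executing them.
    for command in pair_commands("1", "my-dev-env", "my-agent"):
        print(" ".join(command))
```

To actually start a pair, you could pass each list to `subprocess.run(command, check=True)`. Calling `pair_commands` with a different `run_id` per agent gives every agent its own isolated environment, which is what makes running many of them at once practical.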

That way, you’re going to become essentially a conductor of this fleet of agents, not just one or two, but 100, 200, maybe even 1,000. To me, that’s a really exciting future!

Essentially, we’ve been moving from robotic fingers (autocomplete) to robotic arms (agentic coding/vibe coding), and now to a fleet of independent robots that you will be able to manage.

Concluding thoughts: Towards the age of professional vibe coding

So, looking back at these commandments, a majority of them were true even before the age of AI. Breaking down problems into smaller ones (#2), writing lots of tests (#3), keeping files under a reasonable size (#4), organizing your codebase well (#5), moving slow so you can go fast (#6), and making careful architectural decisions (#7) - all of these were good practices even before AI entered the scene.

And now with AI, these software engineering skills remain as important as before, but there are also new skills you’ll need to acquire to work effectively with AI. When people hear “vibe coding,” that might sound easy, and it is easy to get started. But if you want to grow a codebase and keep it maintainable, if you want a stable, long-lived project that doesn’t end up hurting a lot of people through a major incident, then it is important to learn these things.

Alternatively, you can just refuse the idea that agentic coding will become serious. But I don’t think that’s the way the world works. Agents are here. They are becoming a more and more serious part of software engineers’ lives. And if you try to fight it, I think you’re essentially going to become a dinosaur.

Some software engineers, myself included, no longer open the editor the way we used to. The editor used to be the first place to edit code, but now it’s agents first. And I think most software engineers in the world are yet to see that. So if you’re early, you’re going to have an advantage.

If you want to learn more details about some of the points I’ve raised here, as well as more lessons for agentic coding with discipline and skill, feel free to subscribe to this newsletter.